While it is known that they can lead to errors, interruptions can also be imperative in high-risk domains such as healthcare, where patient safety and medical error reduction are now paramount. Human error theory can explain the concept of error and how errors occur at different levels in an organisation.

The Harvard Medical Practice study published in the New England Journal of Medicine in 1991 created a groundswell of interest in patient safety. Though not the first study of its kind, it was the first large-scale randomised approach to patient safety. The impact of the Harvard study was felt outside the US and eventually in the UK as well. As part of a government-led modernisation programme, clinical governance was introduced to the British National Health Service (NHS) to promote quality, reduce risk and realise clearer lines of accountability. It was envisaged that making chief executives of NHS organisations directly accountable for maintaining quality would force them to produce better patient outcomes. Organisational learning was central to the mission of governance. It was not surprising that a flurry of related policy directives followed. 'An Organisation with a Memory' was the British Department of Health's first dedicated, strategic policy document focussed entirely on patient safety and adverse events. It was based upon the advice of an expert group, which drew on human error theory and its already established application to aviation and other high-risk industries. Human error theory accepts the inevitability of error, moving organisations and individuals away from blame and towards learning, while firmly acknowledging accountability.

What is human error theory?

James Reason, Emeritus Professor of Psychology at Manchester University, has explained that human error stems from the interplay of various contributory factors that exist at the level of individual performance (known as active failures), the immediate environment (or local workplace factors) and at a broader, organisational level (systems failures). Active failures include slips, lapses and mistakes. A slip is observable and unintended, and not uncommon in a busy environment. Slips are essentially errors in automated human behaviour, where there is no conscious control and a normal routine is disturbed.

Consequently, slips are potentially a part of all routine behaviours. Donald Norman reminds us that they also tend to take predictable forms, and are likely to be experienced by experts rather than novices (the latter being less able to automate), which has implications for the familiar assumption that new staff are less reliable. Experts are further compromised by the mental storage of many more pre-programmed instructions (or schemata) than their junior colleagues. A lapse is simply forgetting something. For example, a doctor knows well that a patient requires pain relief at four-hourly intervals but forgets to prescribe the analgesia at the times required. A third category of error is a mistake: an action proceeds as planned but does not achieve its intended outcome because the original plan was wrong. For example, a junior doctor may decide he doesn't need to consult his formulary for the dose of a previously unencountered antibiotic, so he chooses the wrong dose due to lack of information. The key element is the decision: he has made a judgement but it has not led to the desired outcome.

Local workplace factors are the phenomena that surround practitioners and sometimes merge to increase the likelihood of an error. They include unworkable processes, an inappropriate skill mix and poor documentation.

Systems failures, such as chronic gaps in supervision or shortfalls in maintenance, also originate in human decisions but, as Professor Reason has written, those decisions are made at a strategic level. At this level, different influences might exist, stemming from group dynamics and, as witnessed in the US Challenger disaster, from structural and cultural sources such as production pressures and bureaucratic accountability. Certain upstream decisions can then lead to numerous error-producing factors downstream.

Research study

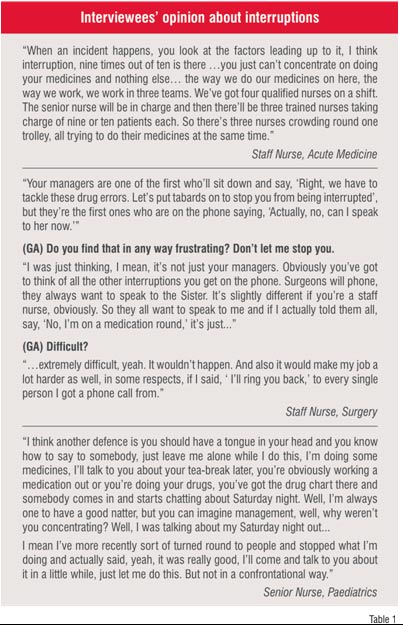

In a recently completed study, I examined the contributory factors in medication errors and their reporting in a large teaching hospital. Data were collected from a retrospective, random sample of just under 1,000 definitive drug error reports submitted over a period of five years. This was followed by 40 qualitative interviews with a volunteer, multi-disciplinary sample of health professionals. Of particular interest were the interview participants' accounts of the contributory factors in medication error, from which a hierarchy of importance emerged (see Table 1). In line with much of the literature on medication errors, the participants elucidated a whole range of contributory factors. Interruptions and distractions, a relatively rare factor in the drug error report analysis, were far more prominent in the interview data. This may be related to an inclination to present written accounts in a particular style.

Some implications

Twenty-four years ago, Gilbert and Mulkay examined the way scientists described their experiments. They established a clear difference in content between written reports and interview data concerning the same scientific processes. The scientists' written reports portrayed a world firmly governed by scientific laws, where the scientist's actions are neutral. However, when interviewed about the very same experiments, the scientists gave quite a different view of their various activities and judgements, admitting that their personal behaviours and social positions also exerted a tangible influence. They added that all sorts of variables impacted on actions.

The literature on interruptions as a contributory factor in medical error is compelling and, like the factors previously discussed, interruptions are a multi-disciplinary problem. Furthermore, policy makers such as the US Institute of Medicine (IoM) have highlighted the phenomenon as significant. Interruptions lead to specific types of error, usually slips, which may then contribute to errors of omission such as failing to give a medication or wash one's hands, and these can be significant.

Nevertheless, interruptions may serve a function. Donald Norman and colleagues have explained that if interrupted, the human functions of storing, retrieving and processing thoughts are usually suspended. Consequently, recovering the original activity, if a new one is introduced, can be difficult. Interestingly, Mohammed Walji and colleagues at NASA have actually argued that interruptions are critical cues in multi-tasked environments such as healthcare and even promote productivity. Yet, to avoid interruptions being seen as an accepted element of practice, Walji has identified three conditions for what might be termed 'effective interruptions'. First, the person must be interrupted at the right time; second, the task they are undertaking should not be spoilt as a consequence of the interruption; and third, the interruption process should be carefully executed to enhance its persuasiveness. However, a notable caveat is supplied by Tucker and Edmondson who, on the basis of their multi-centred observational studies, have proposed that (ostensibly) resourceful and highly adaptable staff who normalise interruptions simply serve to hide away organisational weaknesses.

My interview data exposed another type of interruption. Categorised here as social interruption, and rather like violations in comparison to slips, the social interruption is more likely to be deliberate (or intentional), being a psychosocial rather than cognitive factor. Interruptions require two parties. Of course, the person being interrupted by a social question may not wish to be interrupted. Their inability to say 'no, not now' may similarly hide away organisational weaknesses, but may also be strongly suggestive of a lack of error wisdom. It is clear from these data that interruptions are a cause for concern. It may also be that reporting, if carefully structured, is one means of identifying their role in causation and their effects.

So what can we do?

To gain a better understanding of problems such as interruptions, which are essentially local workplace factors, we have the asset of human error theory. First, we know that interruptions can cause particular error types. This knowledge can then provide a systematic trail from the outcome (a missed medication dose) to the likely contributory factors. Consider a practitioner slip alongside a problematic way of working, such as giving all patient medications when one team of nurses hands over to another, a point when staff availability is probably low and interruptions are frequent.

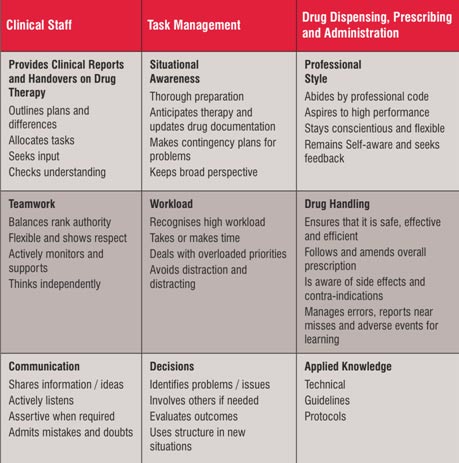

Based on the above knowledge, we could then change practice, but healthcare will never be interruption-free; indeed, this would be counterproductive. Lessons can be learnt from the aviation industry, where interruptions are conditional. Work I carried out with a British airline has led to the development of a drug therapy skills list (Table 2), derived from a pilot skills list, to combat medication errors. It acknowledges that interruptions can be distracting and should be avoided, especially if they cause overload for the recipient.

Effective teamwork also means that whatever the seniority of colleagues, they do not have an unconditional right to interrupt. As the skills list states, practitioners must be 'assertive when required'. One's professional style also demands that social interruptions do not interfere with critical activities. We cannot afford for social interruptions to be normalised. This, however, demands cultural, not just procedural, change and is not easy. Effective leadership is crucial.

Interruptions are a risk worth managing, but managers may not find staff detailing the risk in incident reports unless, of course, they are encouraged to think more carefully about the impact of local workplace conditions on their performance. This might be achieved through more analytic reporting tools, which we are currently developing at the Bradford Institute for Health Research. Oh, and watch out for the expert practitioners: the spontaneous demands on their expertise mean they make errors too!

AUTHOR BIO:

Gerry Armitage worked as a registered nurse for 13 years in both junior and senior posts. Following this, he spent a similar length of time working in higher education, where he led undergraduate nursing programmes and developed new courses with the NHS, the independent sector, and partners outside the UK. In 2007 he completed a three-year research study funded by the Department of Health, which culminated in the introduction of a drug error reporting scheme for an acute hospitals trust.