Chest X-ray radiography is commonly used to detect life-threatening diseases, but interpreting the images can be error-prone. Computer-aided detection (CAD) based on artificial intelligence techniques has shown potential to improve both efficiency and accuracy. This article discusses recent advances in deep learning techniques applied to chest disease detection using radiography.

Chest X-ray (CXR) imaging is a cost-effective technique that radiologists use to examine the organs of the chest and detect diseases and abnormalities. Organs appear differently on the image depending on how much radiation they absorb: bones appear white, the heart appears in shades of grey, and air-filled airways appear black. CXR is a non-invasive, painless, and affordable tool for detecting diseases and monitoring therapy.

Chest diseases are among the most dangerous health issues globally, with lung cancer, pneumonia, tuberculosis (TB), and COVID-19 causing many deaths daily. According to the World Health Organization (WHO), thoracic diseases have a high mortality rate, causing millions of deaths every year. Early detection of these diseases can save lives.

Radiologists can face challenges in interpreting CXR images because of factors such as limited resolution, similarity between the signs of different diseases, and lack of experience. Computer-aided detection/diagnosis (CAD) systems that use machine learning and deep learning algorithms have been proposed to assist radiologists in making decisions. Over the last decade, machine learning (ML) techniques have become increasingly prevalent in medical imaging-based anomaly detection and classification, and numerous studies have reported high accuracy. ML has been used for various purposes in medical image analysis, including organ segmentation, disease detection, and classification, and ML algorithms have been employed to classify diseases such as TB, pneumonia, edema, cardiomegaly, and COVID-19.

CAD systems require large amounts of data to train and test AI algorithms. These data are typically collected in datasets that may also include patient information such as age, race, sex, and insurance type. Such datasets aim to advance research in disease detection, and deep learning (DL) techniques have proven efficient, achieving expert-level performance in clinical tasks when trained on large datasets. Several CXR image datasets are available, including Indiana, ChestX-ray8, ChestX-ray14, KIT, Montgomery, JSRT, Shenzhen, and CheXpert. These datasets contain CXR images with a range of abnormalities; some include metadata, and some were labelled by applying natural language processing (NLP) algorithms to radiology reports.

Preprocessing transforms X-ray images from their original format into a more informative and useful one and enhances their quality. CXR images are typically produced in DICOM format, which contains extensive metadata that can be difficult for non-radiology experts to understand. To make the images more accessible for computer vision, they are often converted to PNG or JPG formats using algorithms that preserve essential information. Preprocessing typically involves de-identifying patient information, converting DICOM images into other formats, and resizing them without sacrificing crucial details. Imbalanced or low-quality datasets can be improved using augmentation, enhancement, segmentation, and bone suppression techniques, which extract meaningful information and enhance the quality of the regions of interest.
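A minimal sketch of the conversion and resizing step is shown below in Python with NumPy. It assumes the DICOM pixel data have already been read into an array (pydicom's `dcmread(path).pixel_array` is one common way to do this); the helper names `to_uint8` and `downsample` are hypothetical, and simple min-max scaling stands in for the DICOM windowing that a production pipeline would usually apply.

```python
import numpy as np


def to_uint8(pixels: np.ndarray, invert: bool = False) -> np.ndarray:
    """Min-max scale a raw (e.g. 12- or 16-bit) CXR pixel array to 0..255.

    Hypothetical helper: plain min-max scaling stands in for DICOM windowing.
    """
    pixels = pixels.astype(np.float64)
    lo, hi = pixels.min(), pixels.max()
    if hi == lo:  # constant image: avoid division by zero
        scaled = np.zeros_like(pixels)
    else:
        scaled = (pixels - lo) / (hi - lo)
    if invert:  # e.g. MONOCHROME1 images, where low values are displayed bright
        scaled = 1.0 - scaled
    return np.round(scaled * 255).astype(np.uint8)


def downsample(img: np.ndarray, factor: int) -> np.ndarray:
    """Shrink a 2-D image by an integer factor by averaging factor x factor blocks."""
    h, w = img.shape
    h, w = h - h % factor, w - w % factor  # drop edge rows/cols that do not fill a block
    blocks = img[:h, :w].reshape(h // factor, factor, w // factor, factor)
    return blocks.mean(axis=(1, 3)).round().astype(img.dtype)
```

The resulting 8-bit array could then be written to PNG, for example with Pillow's `Image.fromarray(arr).save(...)`. Block averaging is used for resizing here only because it needs no extra dependencies; real pipelines typically use a library resampler.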

Training a deep neural network on a small or imbalanced dataset may result in overfitting, leading to poor generalisation and poor performance on unseen images. To mitigate this, researchers use data augmentation techniques such as position-based and colour-based augmentation, which increase the number and variety of CXR training samples and can improve accuracy. Enhancement techniques such as histogram equalisation and filtering adjust contrast, brightness, noise suppression, and edge sharpness to improve image quality and make images more interpretable. Several studies have explored different enhancement techniques, including Gabor filters, contrast-limited adaptive histogram equalisation (CLAHE), unsharp masking, and gamma correction.
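The sketch below illustrates two enhancement steps and one augmentation step on 8-bit greyscale arrays. It assumes NumPy; the function names are hypothetical, global histogram equalisation is shown instead of CLAHE for brevity, and the translation uses `np.roll`, which wraps pixels around the border rather than padding as a real pipeline likely would.

```python
import numpy as np


def equalise_histogram(img: np.ndarray) -> np.ndarray:
    """Global histogram equalisation for an 8-bit greyscale image (not CLAHE)."""
    hist = np.bincount(img.ravel(), minlength=256)
    cdf = hist.cumsum()
    cdf_min = cdf[cdf > 0][0]  # cumulative count at the first occupied grey level
    total = img.size
    if total == cdf_min:  # single grey level: nothing to spread out
        return img.copy()
    lut = np.round((cdf - cdf_min) / (total - cdf_min) * 255)
    lut = np.clip(lut, 0, 255).astype(np.uint8)  # look-up table: old level -> new level
    return lut[img]


def gamma_correct(img: np.ndarray, gamma: float) -> np.ndarray:
    """Apply out = 255 * (in / 255) ** gamma; gamma < 1 brightens dark regions."""
    lut = np.round(255 * (np.arange(256) / 255) ** gamma).astype(np.uint8)
    return lut[img]


def augment(img: np.ndarray, rng: np.random.Generator,
            max_shift: int = 10, max_brightness: int = 20) -> np.ndarray:
    """Hypothetical augmentation: random shift (position-based) plus brightness jitter (colour-based)."""
    dy, dx = rng.integers(-max_shift, max_shift + 1, size=2)
    shifted = np.roll(img, shift=(dy, dx), axis=(0, 1))  # wrap-around translation
    delta = rng.integers(-max_brightness, max_brightness + 1)
    # Widen the dtype before adding so values cannot overflow, then clip back to 8 bits.
    return np.clip(shifted.astype(np.int16) + delta, 0, 255).astype(np.uint8)
```

Augmentation would normally be applied on the fly during training, drawing a fresh shift and brightness offset for each sample from a seeded generator such as `np.random.default_rng(0)`.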

Image segmentation divides CXR images into regions of interest (Figure 1), and various deep learning models such as U-Net, FCN, pix2pix, and ARSeg have been used for this task. Segmentation improves the performance of deep convolutional neural networks (DCNNs) for chest disease detection and classification.

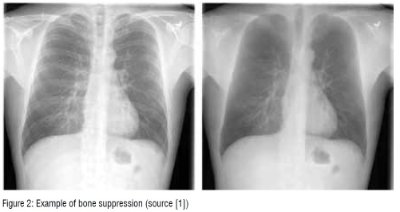
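Once a segmentation model (for example a U-Net) has produced a binary lung mask, the mask can be used to restrict a classifier's input to the regions of interest. The following NumPy sketch assumes the mask has the same shape as the image; `apply_lung_mask` and its `margin` parameter are hypothetical.

```python
import numpy as np


def apply_lung_mask(img: np.ndarray, mask: np.ndarray, margin: int = 0) -> np.ndarray:
    """Hypothetical helper: zero pixels outside the mask, then crop to its bounding box."""
    if img.shape != mask.shape:
        raise ValueError("image and mask must have the same shape")
    if not mask.any():
        raise ValueError("mask is empty")
    masked = np.where(mask.astype(bool), img, 0)  # keep only segmented (lung) pixels
    rows = np.flatnonzero(mask.any(axis=1))  # rows containing at least one mask pixel
    cols = np.flatnonzero(mask.any(axis=0))  # columns containing at least one mask pixel
    top = max(rows[0] - margin, 0)
    bottom = min(rows[-1] + margin + 1, img.shape[0])
    left = max(cols[0] - margin, 0)
    right = min(cols[-1] + margin + 1, img.shape[1])
    return masked[top:bottom, left:right]
```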

Bone suppression is also popular. This technique removes bones from CXR images to improve the visibility of soft tissue and prevent bones from overlapping with signs of disease (Figure 2). Researchers have studied bone suppression using different methods, including image filtering, gradient differences, and separating bone structures from soft tissue with a DCNN model. Bone suppression has been shown to improve the performance of deep models for detecting various diseases, including lung cancer and COVID-19.

Deep learning for chest disease detection

Various CAD systems have been developed to detect chest diseases using different techniques. Early detection of thoracic conditions is crucial for successful treatment, because diseases such as pneumonia, pulmonary nodules, TB, and COVID-19 become more severe as they progress. Three types of abnormality can be observed in CXR images: texture abnormalities, focal abnormalities, and abnormalities of form.

Detecting pneumonia in CXR images can be challenging for radiologists because it can be confused with other diseases. To avoid misdiagnosis, various methods have been developed, including different DL architectures. One study proposed a model based on the Swin Transformer that incorporated image enhancement and data augmentation and achieved high accuracy on two popular datasets. Another study used an attention-based DCNN model to classify CXR images as normal or pneumonia with high accuracy. Other researchers implemented two DCNN models with transfer learning, achieving high accuracy in binary classification of pneumonia cases. CheXNet, a 121-layer convolutional neural network developed in a separate study, achieved high performance in detecting and localising pneumonia. More recently, researchers proposed a CAD system that combined transfer learning with an ensemble of three different DCNN models. Other studies used methods such as customised CNN models and various data augmentation techniques to overcome overfitting and achieve high accuracy in pneumonia detection.
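The text does not specify how the three DCNN models' predictions are combined; soft voting, which averages the class probabilities produced by each model, is one common option and is assumed in this NumPy sketch. The function name `soft_vote` and the optional per-model weights are illustrative.

```python
import numpy as np


def soft_vote(prob_list, weights=None):
    """Hypothetical soft-voting ensemble over per-model class probabilities.

    prob_list: sequence of arrays, each of shape (n_images, n_classes).
    Returns the (weighted) mean probabilities and the predicted class per image.
    """
    probs = np.stack(prob_list)  # shape: (n_models, n_images, n_classes)
    if weights is None:
        weights = np.ones(len(prob_list))
    weights = np.asarray(weights, dtype=float)
    weights = weights / weights.sum()  # normalise so the result stays a probability
    avg = np.tensordot(weights, probs, axes=1)  # weighted mean over the model axis
    return avg, avg.argmax(axis=1)
```

For example, passing three `(n_images, 2)` arrays of normal/pneumonia probabilities returns their mean and the predicted class index per image. Weighting lets a stronger model contribute more, at the cost of having to tune the weights on validation data.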

The WHO regards lung cancer as a very serious condition, especially for men: it is the most frequent type of cancer in men and the third most common in women. Early diagnosis of lung nodules, which can be a manifestation of the disease, is crucial for effective treatment. Multiple studies have evaluated how well deep learning (DL) systems help radiologists detect pulmonary nodules in medical images. DL algorithms have been shown to perform well on different types of medical imaging, particularly X-ray radiographs. For instance, one study showed that the performance of 12 radiologists in detecting malignant pulmonary nodules improved by more than 5 per cent when aided by a DL system. Similarly, another study reported that a ResNet-based model outperformed six radiologists in detecting operable lung cancer. Multiple recent studies have reported high accuracy and sensitivity for DL models that detect lung nodules in CXR images.
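Sensitivity (the share of diseased cases a model flags) and specificity (the share of healthy cases it correctly clears) are commonly reported alongside accuracy for nodule detection. The helper below is a hypothetical, dependency-free sketch that computes both from binary image-level labels, with 1 meaning a nodule is present.

```python
def sensitivity_specificity(y_true, y_pred):
    """Hypothetical helper: (sensitivity, specificity) for binary labels, 1 = nodule present."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # nodules correctly flagged
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # nodules missed
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # healthy images correctly cleared
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false alarms
    sensitivity = tp / (tp + fn) if tp + fn else float("nan")
    specificity = tn / (tn + fp) if tn + fp else float("nan")
    return sensitivity, specificity
```

This treats each image as a single positive or negative case; studies that localise nodules often report lesion-level sensitivity instead, which additionally requires matching each prediction to a ground-truth nodule location.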

The WHO ranks tuberculosis (TB) among the top 10 causes of death worldwide; it is the second deadliest infectious disease after COVID-19, ahead of HIV/AIDS. In 2020, approximately 10 million people were diagnosed with TB worldwide, 1.1 million of them children. TB is caused by a bacterium (Mycobacterium tuberculosis) that primarily affects the lungs, and it spreads through the air when infected people cough or sneeze. Early detection of TB is essential, and DL has been used to detect TB in CXR images. Proposed approaches include TBXNet, VGG16-CoordAttention, ConvNet, and an ensemble method combining AlexNet and GoogLeNet. These approaches achieved accuracy rates ranging from 87.0 per cent to 99.75 per cent, although they were evaluated on different datasets, so the figures are not directly comparable. These DL methods offer a promising tool for the early detection of TB.

The emergence of COVID-19 in 2019 led to a pandemic that has caused millions of deaths worldwide. Traditional clinical techniques for detecting the virus are expensive and time-consuming, but CXR images have shown promise for detecting and monitoring the effects of COVID-19 on lung tissue. DL algorithms have been used to classify CXR images as normal, pneumonia, or COVID-19. Initially, researchers were hampered by a lack of CXR images of positive cases, but open-access CXR datasets with COVID-19 cases were eventually created. Techniques such as transfer learning, fine-tuning, and data augmentation have been used to improve the performance of DL models. Some models use multiple steps: first detecting the presence of pneumonia, then distinguishing between pneumonia and COVID-19. Several models have achieved accuracies ranging from 93.94 per cent to 99.63 per cent.
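The multi-step approach can be sketched as a cascade of two binary classifiers: the first separates normal images from pneumonia-like images, and only positive images reach a second classifier that separates other pneumonia from COVID-19. This is an illustrative arrangement rather than a specific published model; the two models are assumed to be callables returning a probability, and `two_stage_classify` and the shared 0.5 threshold are hypothetical.

```python
from typing import Any, Callable

# Assumption: each model maps a preprocessed image to a probability in [0, 1].
Model = Callable[[Any], float]


def two_stage_classify(image: Any, pneumonia_model: Model, covid_model: Model,
                       threshold: float = 0.5) -> str:
    """Hypothetical cascade: normal vs pneumonia first, then pneumonia vs COVID-19."""
    # Stage 1: is there any pneumonia-like pattern at all?
    if pneumonia_model(image) < threshold:
        return "normal"
    # Stage 2: only pneumonia-positive images reach the COVID-19 classifier.
    if covid_model(image) >= threshold:
        return "COVID-19"
    return "pneumonia"
```

Stub models such as `lambda img: img[0]` are enough to exercise the control flow. One appeal of the cascade is that the second model can concentrate on the harder pneumonia versus COVID-19 distinction.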

Some patients suffer from multiple diseases simultaneously, which can increase the risk to their lives. Detecting multiple pathologies in CXR images can be difficult for radiologists because the signs of different diseases are often similar. To overcome this challenge, various deep learning (DL) models have been proposed to detect multiple chest diseases, including pulmonary nodules, pleural effusion, cardiomegaly, and other abnormalities. Some of these models use weakly supervised methods, LSTM-based approaches, ensemble learning, and attention mechanisms to improve performance. The best-performing models have reported AUC scores ranging from 73.00 per cent to 94.89 per cent. Other models have used cascade neural networks and multiple DCNN models to classify multiple diseases, with average AUCs ranging from 79.50 per cent to 85.37 per cent. EfficientNet-V2M and Xception models have also been used to classify CXR images into different classes with high accuracy.
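In multi-label settings each finding typically gets its own sigmoid score, and AUC is computed separately per finding and then averaged. The pure-Python sketch below computes AUC as the probability that a randomly chosen positive image scores higher than a randomly chosen negative one, with ties counting as half; the function names and the finding names in any usage are hypothetical.

```python
def roc_auc(labels, scores):
    """AUC as P(random positive outscores random negative); ties count 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:  # compare every positive with every negative
        for n in neg:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0
    return wins / (len(pos) * len(neg))


def per_label_auc(label_matrix, score_matrix, names):
    """Hypothetical helper: one AUC per finding (column) of a multi-label problem."""
    return {
        name: roc_auc([row[j] for row in label_matrix], [row[j] for row in score_matrix])
        for j, name in enumerate(names)
    }


def mean_auc(aucs):
    """Average the per-finding AUCs into a single summary figure."""
    return sum(aucs.values()) / len(aucs)
```

The pairwise loop is quadratic in the number of images, so in practice a library routine such as scikit-learn's `roc_auc_score` would normally be used instead.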

Medical image analysis using deep learning has emerged as a promising research area at the intersection of medicine and computer science, offering a variety of methods and solutions for predicting and preventing diseases.

Initially, ML algorithms were used to detect diseases in medical images automatically and showed potential on small datasets. However, ML-based anomaly detection relies on technical steps such as manual feature extraction and the intervention of specialists, which can limit performance on large datasets.

DL techniques overcome these limitations of ML and have proven effective, particularly when large datasets and substantial computational resources are available. Residual Networks have shown potential for classifying various medical conditions effectively, especially when data are limited. However, DL models are considered black boxes, which makes it difficult to interpret their decisions. To address this issue, researchers are developing explainable disease-detection approaches that produce interpretable results for radiologists, such as reports and heatmap visualisations. The purpose of AI and DL techniques is to collaborate with radiologists to improve performance and speed up the diagnostic process.
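Heatmaps are often produced with class activation mapping (CAM), which weights the last convolutional layer's feature maps by the classifier weights of the target class. The NumPy sketch below assumes a network that ends in global average pooling followed by a linear layer, as CAM requires; the function name is hypothetical, and clipping negative values before normalising is a common visualisation choice rather than part of every variant.

```python
import numpy as np


def class_activation_map(features: np.ndarray, class_weights: np.ndarray) -> np.ndarray:
    """Hypothetical CAM sketch.

    features: (K, H, W) activations from the last convolutional layer.
    class_weights: (K,) weights of the target class in the final linear layer,
    assumed to sit directly after global average pooling.
    """
    cam = np.tensordot(class_weights, features, axes=1)  # weighted sum of maps -> (H, W)
    cam = np.maximum(cam, 0)  # keep only evidence in favour of the class
    peak = cam.max()
    return cam / peak if peak > 0 else cam  # scale to [0, 1] for display
```

The resulting low-resolution map would then be upsampled to the radiograph's size and overlaid as a colour heatmap.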

This article summarises research in several areas, including the most widely used X-ray datasets, preprocessing techniques, and recent DL architectures, with a particular focus on chest disease classification.

Additional details about this topic can be found in the following open access paper:

Source [1] A. Ait Nasser and M. A. Akhloufi, “A Review of Recent Advances in Deep Learning Models for Chest Disease Detection Using Radiography,” Diagnostics, vol. 13, no. 1, Art. no. 159, Jan. 2023, https://doi.org/10.3390/diagnostics13010159.